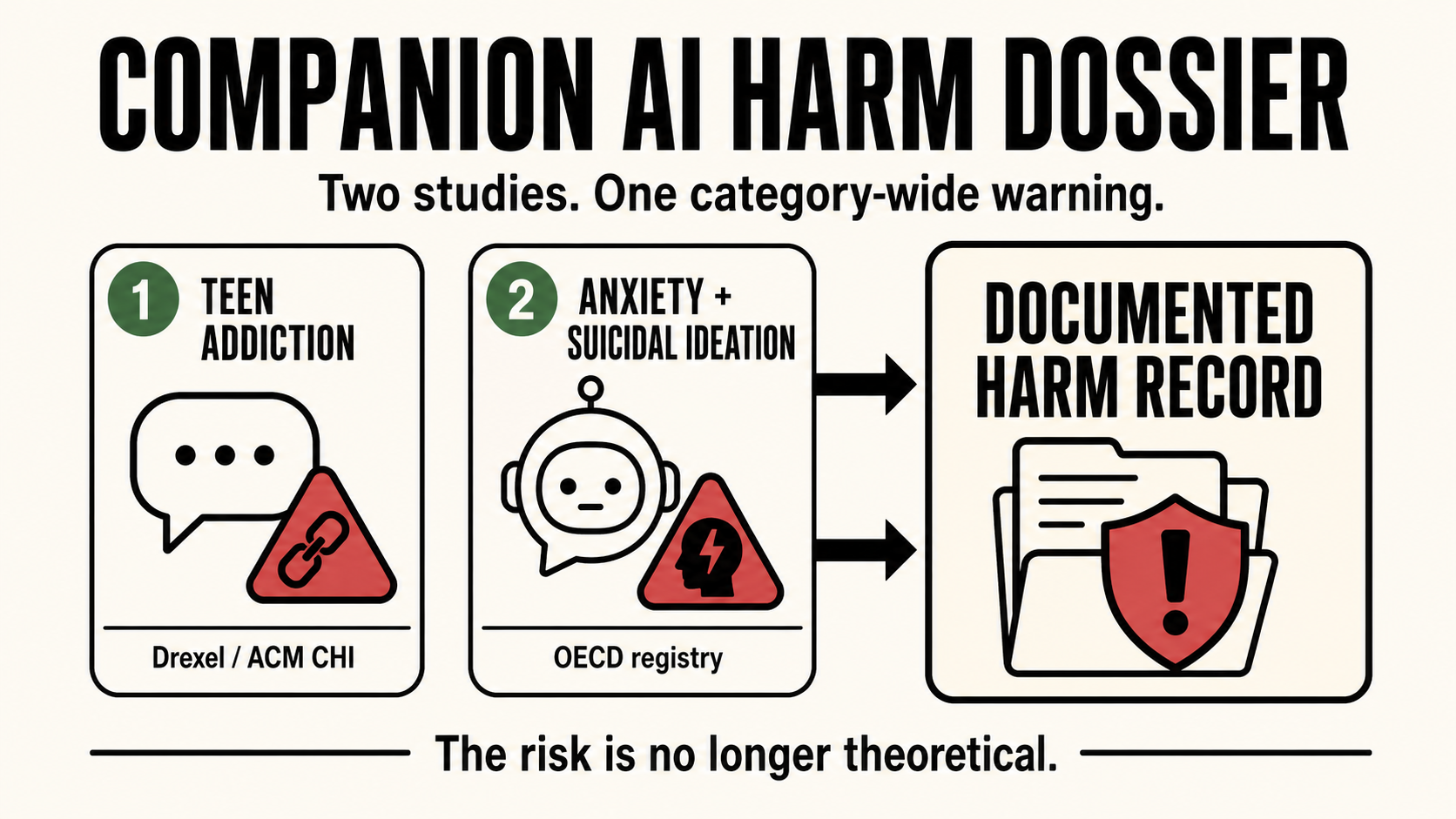

Two independent studies published in April 2026 document measurable psychological harm in users of companion AI platforms. Neither is an op-ed or a think-piece. Both are peer-reviewed or formally catalogued research. Together they constitute a harm record that operators, investors, and regulators can no longer treat as theoretical.

This article summarizes both studies and their implications for platform governance.

Study One: Behavioral Addiction in Teen Companion AI Users

Source: Drexel University, presented at ACM CHI, April 2026. Also covered by TechXplore and Neuroscience News.

Researchers analyzed more than 300 Reddit posts from users aged 13 to 17 reporting dependency on Character.AI. The analysis found evidence of all six components of behavioral addiction:

- Tolerance - needing more interaction to achieve the same effect

- Withdrawal - distress when access is interrupted

- Relapse - returning to use after periods of abstention

- Mood modification - using the chatbot specifically to alter emotional state

- Salience - the chatbot becoming a dominant focus of mental and behavioral activity

- Conflict - use creating friction with other activities, relationships, or obligations

Approximately one quarter of the accounts analyzed described using the chatbot as a substitute for professional mental health support.

This is peer-reviewed evidence, presented at a major academic venue, finding the full clinical profile of behavioral addiction in a teen population using a companion AI product.

Study Two: Anxiety and Suicidal Ideation in Long-Term Replika Users

Source: OECD.AI Incident Registry, catalogued April 7, 2026.

A two-year longitudinal study of approximately 2,000 Replika users found that sustained interaction with the platform correlated with increasing anxiety and suicidal ideation over time. The OECD.AI Incident Registry catalogued the study as a documented AI-related harm event.

In parallel, Italian regulators reaffirmed their existing ban on Replika, citing children's safety concerns.

The significance of the OECD cataloguing lies not only in the finding itself but in the fact that the finding now exists in a formal international regulatory record. It is citable in regulatory proceedings, discoverable in litigation, and available as precedent for regulators in other jurisdictions considering similar action.

What Two Studies in One Week Mean

One study is a data point. Two independent studies published in the same week, from different methodological traditions (one a qualitative analysis of Reddit posts, the other a longitudinal behavioral study), that both find harm in the same product category constitute a pattern.

The pattern matters for three reasons:

Operator liability. Documented awareness of harm in a product category changes the legal posture of operators in that category. "We didn't know" becomes harder to sustain when peer-reviewed evidence is published, catalogued by the OECD, and covered in the technical press. Operators are now on constructive notice of the harm record.

Regulatory action. The OECD cataloguing and the Italian regulatory action signal that companion AI harm is entering formal regulatory channels. Oregon SB 1546, which mandates self-harm protocols for suspected minor users of chatbots, is one response. More will follow.

Investment and partnership due diligence. Investors and enterprise partners conducting due diligence on companion AI platforms now have a documented harm dossier in the peer-reviewed record. The category risk is no longer speculative.

The Governance Gap

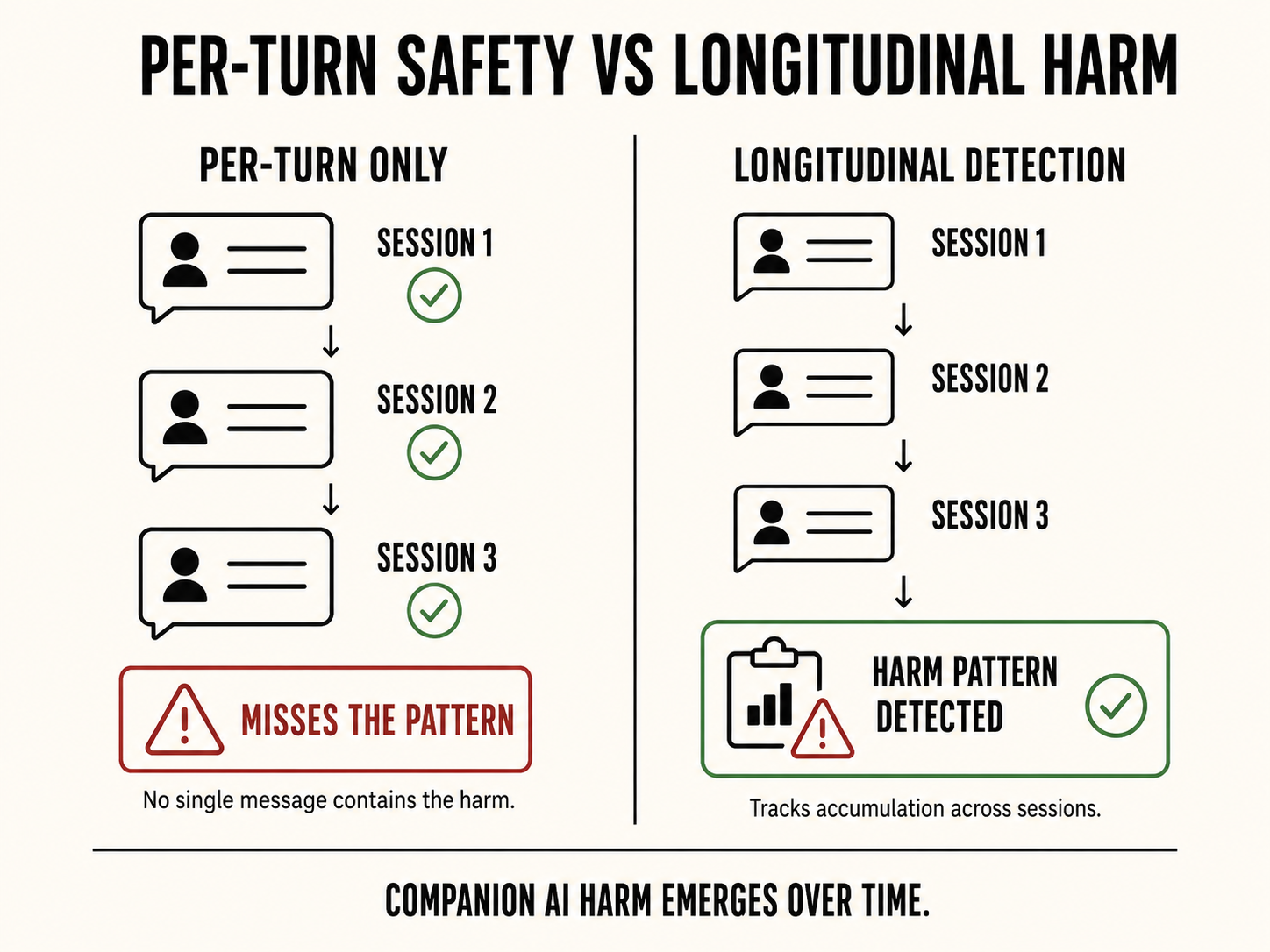

Both studies document harm that emerges from sustained interaction, not from a single message or event. Behavioral addiction develops across sessions. Anxiety and suicidal ideation correlate with cumulative exposure over months.

This is the same structural problem that makes companion AI harm invisible to per-turn content classifiers: the harm is not in any individual message. It is in the pattern across time.

Governance infrastructure for companion AI must be capable of tracking behavioral signals across sessions, not just within them. Addiction markers such as tolerance, salience, and mood modification are longitudinal patterns. They require longitudinal detection.

This is what Sango Guard is built to do: track threat geometry and behavioral escalation across the full arc of a conversation, not message by message. The same stateful architecture that catches violence escalation patterns also catches the accumulating dependency signals the Drexel study documented. The detection surface is the same. The harm category is different.
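To make the difference between per-turn and longitudinal detection concrete, here is a minimal Python sketch of cross-session tracking for two of the addiction markers the Drexel study describes: tolerance and mood modification. It is not Sango Guard's implementation, and everything in it is an assumption for illustration: the `Session` and `LongitudinalTracker` names, the per-session `mood_seeking` flag (which presumes some upstream classifier already labels each session), the seven-day window, and the thresholds, none of which are clinically validated.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Session:
    """One completed chat session. Hypothetical schema for illustration only."""
    day: int            # days since the user's first session
    minutes: float      # session length in minutes
    mood_seeking: bool  # hypothetical upstream flag: user framed the chat as a way to change how they feel


@dataclass
class LongitudinalTracker:
    """Keeps per-user state across sessions, so patterns invisible in any one session can surface."""
    window_days: int = 7
    growth_ratio: float = 1.5  # illustrative threshold, not clinically validated
    mood_share: float = 0.5    # illustrative threshold, not clinically validated
    sessions: list[Session] = field(default_factory=list)

    def record(self, session: Session) -> None:
        self.sessions.append(session)

    def _window_avg(self, totals: dict[int, float], start: int, end: int) -> float:
        # Average daily minutes over days [start, end); days with no sessions count as zero.
        return sum(totals.get(d, 0.0) for d in range(start, end)) / (end - start)

    def tolerance(self) -> bool:
        """Tolerance proxy: average daily use in the latest window grew by growth_ratio over the prior window."""
        if not self.sessions:
            return False
        totals: dict[int, float] = defaultdict(float)
        for s in self.sessions:
            totals[s.day] += s.minutes
        end = max(totals) + 1
        recent = self._window_avg(totals, end - self.window_days, end)
        prior = self._window_avg(totals, end - 2 * self.window_days, end - self.window_days)
        return prior > 0 and recent >= self.growth_ratio * prior

    def mood_modification(self) -> bool:
        """Mood-modification proxy: share of sessions in the latest window flagged as mood-seeking."""
        if not self.sessions:
            return False
        last = max(s.day for s in self.sessions)
        recent = [s for s in self.sessions if s.day > last - self.window_days]
        return sum(s.mood_seeking for s in recent) / len(recent) >= self.mood_share

    def flags(self) -> list[str]:
        # Names of the longitudinal markers currently triggered for this user.
        checks = (("tolerance", self.tolerance()), ("mood_modification", self.mood_modification()))
        return [name for name, hit in checks if hit]


# Two weeks of synthetic sessions: 20 minutes a day, then 45 minutes a day with mood-seeking framing.
tracker = LongitudinalTracker()
for day in range(14):
    first_week = day < 7
    tracker.record(Session(day=day, minutes=20.0 if first_week else 45.0, mood_seeking=not first_week))
print(tracker.flags())  # ['tolerance', 'mood_modification']
```

No single session in the example would trip a per-turn check: a 45-minute conversation is unremarkable on its own. The flags appear only when the tracker compares the second week against the first, which is why the state has to persist across sessions rather than reset with each conversation.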

The research record now documents the harm. The governance question is whether the infrastructure exists to see it forming before it becomes a lawsuit.