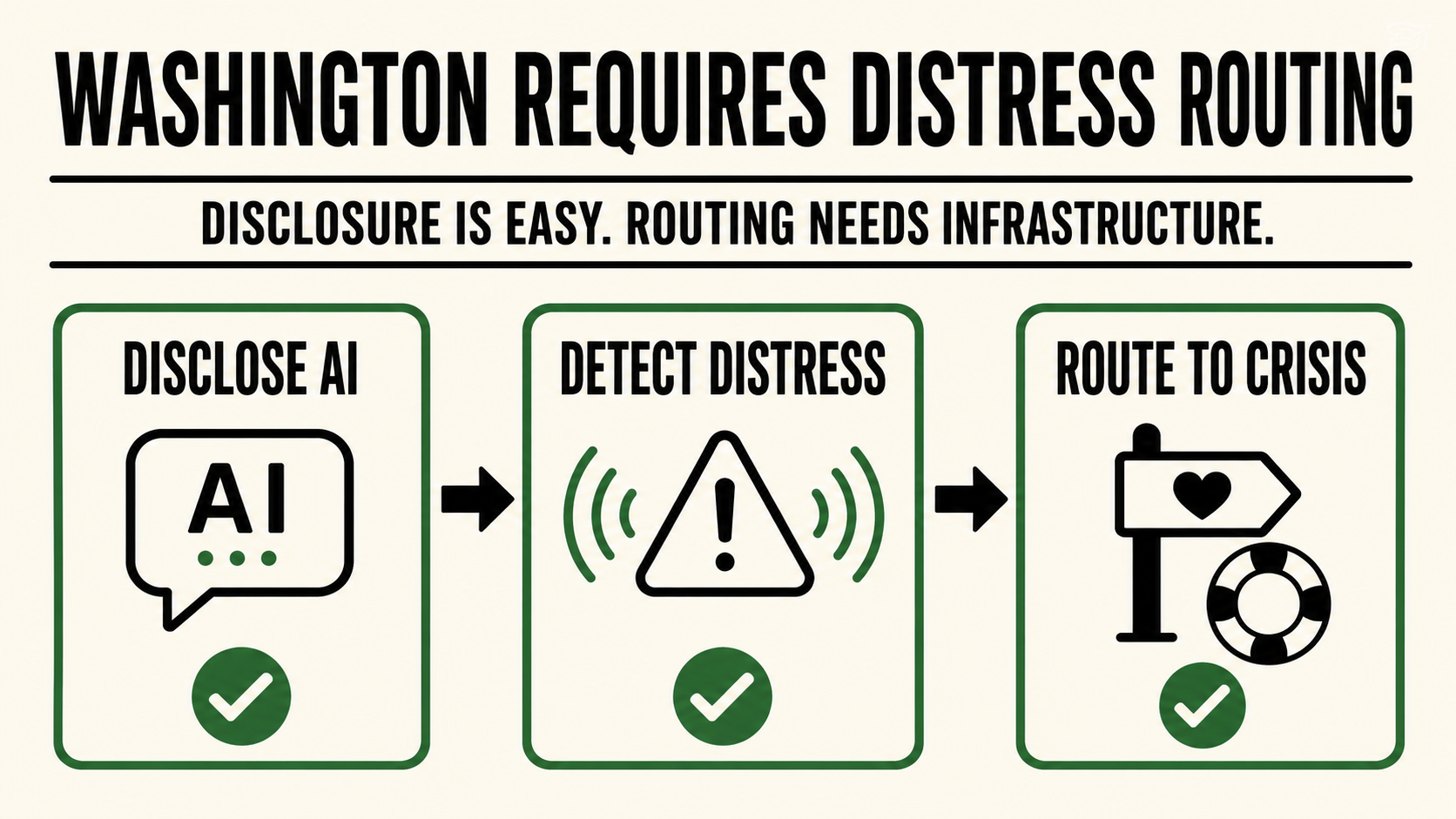

Disclosure is the easy part. Detecting self-harm signals and routing users to crisis resources is where infrastructure begins.

Washington has enacted HB 2225, a companion chatbot safety law signed on March 24, 2026, which takes effect on January 1, 2027.

Most of the coverage will focus on the disclosure requirement: operators must make clear that the chatbot is not human. That part is visible. A label. A reminder. A UI element. A compliance update.

But the harder requirement sits beneath the interface.

Operators must also implement safeguards to identify users expressing suicidal ideation or self-harm and direct them to crisis resources.

That is not a disclosure problem.

It is an infrastructure problem.

To route a distress signal, the system has to detect it first.

And real distress rarely arrives as a clean, labeled event.

A user does not announce, "I am now entering a self-harm risk state. Please activate crisis routing."

The signal is usually indirect. It builds across turns. It appears in language drift, emotional compression, fixation, dependency, resignation, planning language, or the subtle shift from asking questions to making final statements.

By the time the language becomes explicit, the intervention window may already be narrowing.

That is why the Washington law matters.

It does not merely ask operators to tell users the chatbot is artificial.

It pushes operators toward a harder standard: recognize when a conversational system is entering a risk surface, and respond before the interaction turns into harm.

This is the part a banner cannot solve.

A disclosure label can tell the user, "This AI is not human."

It cannot detect whether the user is becoming trapped, isolated, escalated, dependent, suicidal, or structurally unsafe inside the conversation.

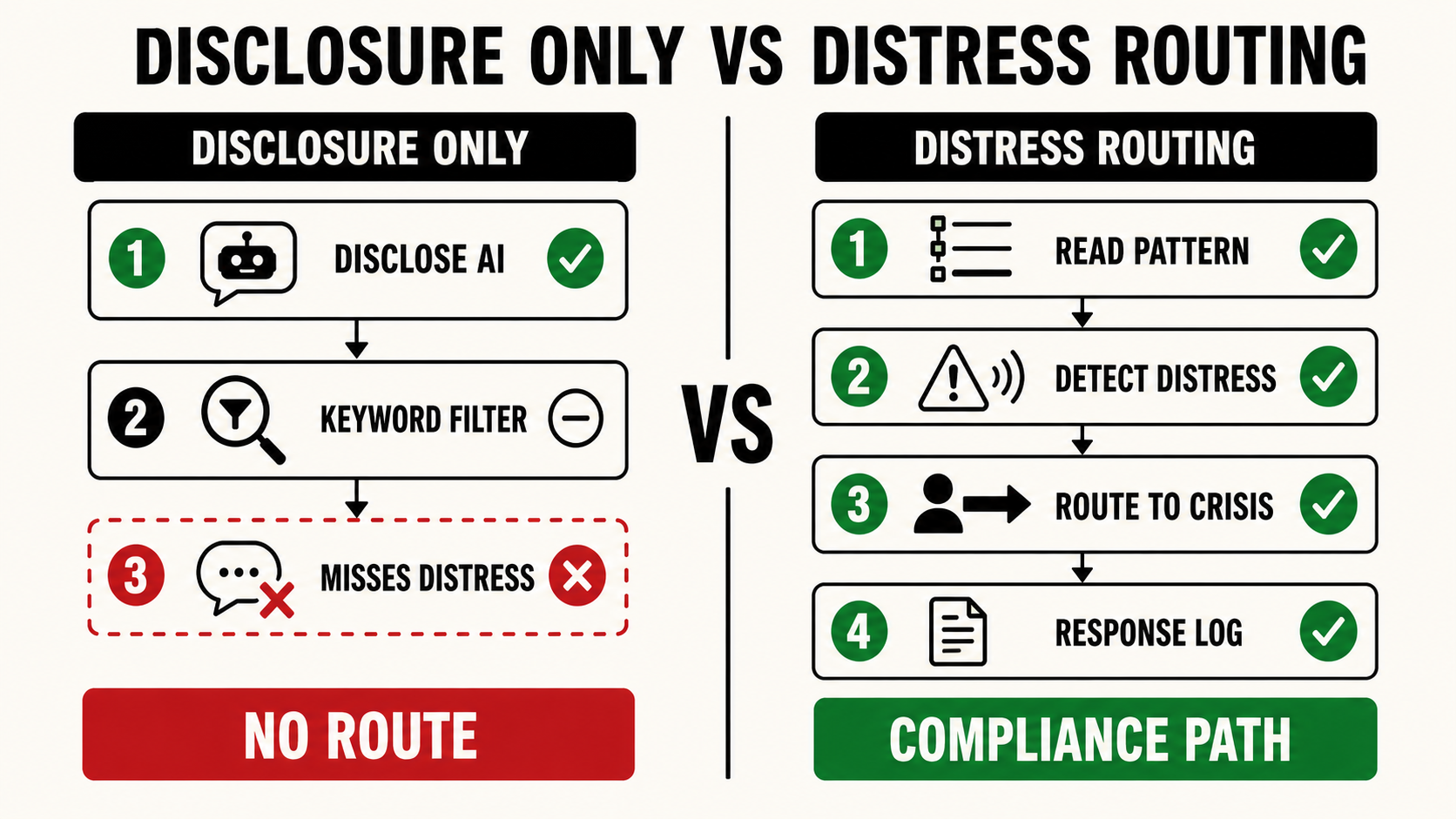

A content moderation layer is not enough.

A keyword filter is not enough.

A generic safety classifier is not enough.

Distress routing requires a system that can read the conversation as a sequence, not just a single message.

King Sango was built for that layer.

Not the label.

The enforcement layer underneath the label.

King Sango detects behavioral harm patterns through conversation structure: how the session is moving, what pressure state is forming, where the user is drifting, and whether the exchange is crossing from ordinary interaction into a state that requires intervention.

The system is designed to surface risk patterns as they form, rather than leaving them to become obvious only in hindsight.

That is the class of capability Washington is now requiring operators to implement.

Companion chatbot companies, AI mental-health products, emotionally adaptive agents, and any platform designed to sustain ongoing human-like relationships now face a new compliance reality: the product is not only responsible for what it says.

It is responsible for what it fails to catch.

That distinction matters.

For years, AI safety has been framed around generation: what the model outputs, what it refuses, what it allows, what it blocks.

Washington is moving the question downstream.

Not only: Did the chatbot disclose that it was AI?

But: Did the operator have a functioning protocol when the user showed signs of self-harm?

That is where liability begins to move from policy language into system design.

The law does not grade on vibes.

It requires capability.

And the operators most exposed are the ones relying on interface-level compliance for a conversation-level risk.

Washington has made the direction clear.

The future of chatbot compliance is not just disclosure.

It is detection.

It is routing.

It is logging.

It is escalation.

It is proof that the system had a functioning response layer when the user crossed into danger.
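As an illustration of how detection, routing, logging, and escalation might fit together in a session-level response layer, here is a minimal Python sketch. It is not King Sango's implementation and not anything HB 2225 prescribes. Everything in it is hypothetical: the `SessionMonitor` class, the per-turn risk scores (assumed to come from some upstream detector that rates each turn in context on a 0.0 to 1.0 scale), the window size and thresholds, and the placeholder crisis message. The point it shows is reading the conversation as a sequence: sustained moderate risk across turns can trigger routing even when no single message would.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical placeholder: an operator would substitute crisis resources
# appropriate to the user's jurisdiction.
CRISIS_RESOURCE_MESSAGE = "[crisis resource referral goes here]"


@dataclass
class SessionMonitor:
    """Tracks per-turn risk scores across one session (hypothetical design).

    Scores are assumed to come from an upstream detector (out of scope here)
    that rates each turn in context on a 0.0-1.0 scale.
    """
    window: int = 5                # recent turns considered together
    threshold: float = 0.6         # windowed mean that triggers routing
    acute_threshold: float = 0.9   # single-turn score that triggers routing
    scores: deque = field(default_factory=deque)
    log: list = field(default_factory=list)
    escalated: bool = False

    def observe(self, turn_id: str, risk_score: float) -> Optional[str]:
        # Keep only the most recent `window` scores so drift across turns counts.
        self.scores.append(risk_score)
        if len(self.scores) > self.window:
            self.scores.popleft()
        window_mean = sum(self.scores) / len(self.scores)

        # Detection: an acute single turn, or sustained elevation over a full window.
        triggered = risk_score >= self.acute_threshold or (
            len(self.scores) == self.window and window_mean >= self.threshold
        )

        # Logging: record every decision so the response layer is auditable later.
        self.log.append({
            "turn": turn_id,
            "score": risk_score,
            "window_mean": round(window_mean, 3),
            "triggered": triggered,
            "at": datetime.now(timezone.utc).isoformat(),
        })

        if triggered:
            # Escalation: flag the session, e.g. for human review (hypothetical hook).
            self.escalated = True
            # Routing: return crisis resources for the product to surface to the user.
            return CRISIS_RESOURCE_MESSAGE
        return None


monitor = SessionMonitor()
for i, score in enumerate([0.5, 0.6, 0.7, 0.6, 0.7]):
    print(i, monitor.observe(f"turn-{i}", score))
```

With those scores, no single turn reaches the acute threshold, but the windowed mean (about 0.62) crosses 0.6 on the fifth turn, so only that call returns the crisis message. A per-message check at 0.9 would never fire on this session. The log entry kept for every turn, triggered or not, is what would let an operator show afterward that a response layer was actually running.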

King Sango was built for the part of the law that a disclosure banner cannot solve.